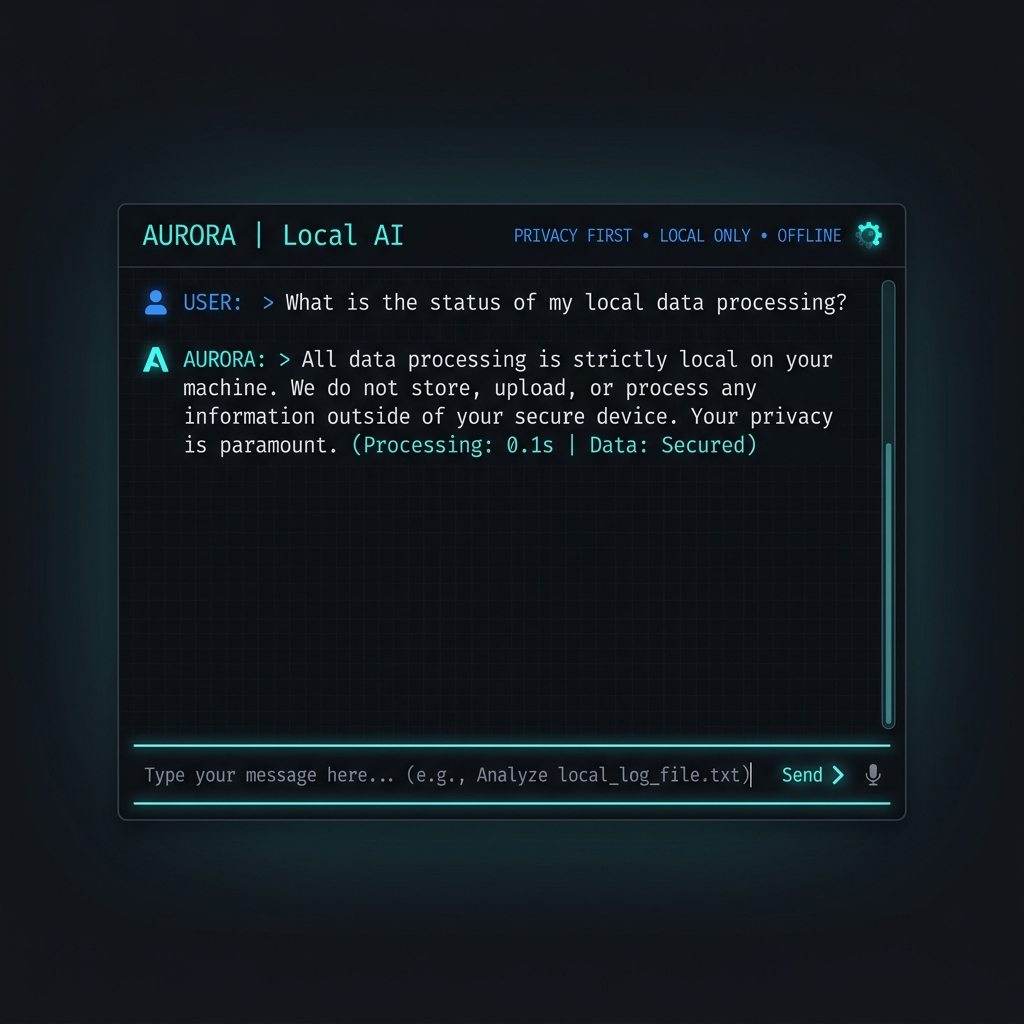

Local Ollama App

A privacy-first AI application that runs entirely on your local machine using Ollama. No data ever leaves your computer, making it ideal for sensitive information.
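"No data leaves your computer" holds only while the backend points at an Ollama server on the same machine. Below is a minimal guard for that. It assumes the app is configured with an Ollama base URL such as `http://localhost:11434`, which is Ollama's default. The helper names `isLoopbackHost` and `assertLocalOllama` are hypothetical and are not part of the AINative packages.

```typescript
// Hypothetical guard (not an AINative API): only allow Ollama hosts on the
// loopback interface, so prompts and responses never cross the network.
const LOOPBACK_NAMES = new Set(["localhost", "[::1]"]);

// Returns a parsed URL, or null if the string is not a valid absolute URL.
function parseUrl(value: string): URL | null {
  try {
    return new URL(value);
  } catch {
    return null;
  }
}

export function isLoopbackHost(host: string): boolean {
  const url = parseUrl(host);
  if (url === null) return false; // unparseable input is treated as unsafe
  // URL.hostname keeps brackets on IPv6 literals, e.g. "[::1]".
  if (LOOPBACK_NAMES.has(url.hostname)) return true;
  // Any dotted-quad address in 127.0.0.0/8 is loopback.
  return /^127\.\d{1,3}\.\d{1,3}\.\d{1,3}$/.test(url.hostname);
}

export function assertLocalOllama(host: string): void {
  if (!isLoopbackHost(host)) {
    throw new Error(`Refusing non-local Ollama host: ${host}`);
  }
}
```

Call `assertLocalOllama(host)` once at startup, before the host reaches the chat backend. An accidental remote URL then fails loudly instead of silently sending private data off the machine.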

Core Capabilities

Every starter is pre-configured with industry best practices and deep AINative integrations. Clean, modular, and designed to scale from prototype to production.

100% private and offline

Enterprise-ready implementation with full type safety and observability. Optimized for high-throughput streaming and minimal latency.

Supports Llama 3, Mistral, and more

Optimized for Edge runtimes and serverless environments.
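Model support depends on what has been pulled into the local Ollama install, for example with `ollama pull llama3` or `ollama pull mistral`. Ollama's `GET /api/tags` endpoint lists those models. The sketch below checks that a configured model name is installed before it is used. It relies only on the `name` field of each entry in that response, and the helpers `listLocalModels` and `hasModel` are hypothetical.

```typescript
// The part of Ollama's GET /api/tags response used here.
interface OllamaTagsResponse {
  models: { name: string }[];
}

// Fetches the locally installed models (assumes Node 18+ or a browser for fetch).
export async function listLocalModels(
  host = "http://localhost:11434",
): Promise<OllamaTagsResponse> {
  const res = await fetch(`${host}/api/tags`);
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  return (await res.json()) as OllamaTagsResponse;
}

// Ollama names installed models "<model>:<tag>"; an untagged name means ":latest".
export function hasModel(tags: OllamaTagsResponse, wanted: string): boolean {
  const fullName = wanted.includes(":") ? wanted : `${wanted}:latest`;
  return tags.models.some((m) => m.name === fullName);
}
```

Ollama stores an untagged pull such as `llama3` as `llama3:latest`, so `hasModel` appends `:latest` when no tag is given. A request for `mistral` therefore does not match an install that only has `mistral:7b`.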

Zero API costs

Secure by default with built-in validation and CSRF protection.

Fast local inference

Deep integration with the AINative Reconciler state system for buttery-smooth UI updates and zero-flicker streaming.
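Local inference feels fast partly because text appears as it is generated. With `stream: true`, Ollama's `POST /api/chat` returns newline-delimited JSON:

- Each line is one object, with the next piece of text in `message.content`.
- The final line carries `done: true`.

The sketch below accumulates that stream and tolerates network chunks that split a line in half. It illustrates the wire format only. It is not the AINative Reconciler, and `OllamaStreamAccumulator` is a hypothetical name.

```typescript
// One line of Ollama's streamed /api/chat output (only the fields used here).
interface OllamaChatChunk {
  message?: { role: string; content: string };
  done: boolean;
}

export class OllamaStreamAccumulator {
  private buffer = ""; // holds a trailing, not-yet-complete line
  text = "";           // all assistant text received so far
  done = false;        // becomes true once the final object arrives

  // Feed one decoded chunk of the HTTP body; returns the text it added.
  push(chunk: string): string {
    this.buffer += chunk;
    const lines = this.buffer.split("\n");
    this.buffer = lines.pop() ?? ""; // the last piece may be incomplete
    let appended = "";
    for (const line of lines) {
      if (line.trim() === "") continue;
      const parsed = JSON.parse(line) as OllamaChatChunk;
      appended += parsed.message?.content ?? "";
      if (parsed.done) this.done = true;
    }
    this.text += appended;
    return appended;
  }

  // Parse whatever remains once the body has ended without a final newline.
  end(): string {
    const rest = this.buffer;
    this.buffer = "";
    return rest.trim() === "" ? "" : this.push(rest + "\n");
  }
}
```

In a browser or Node 18+, each chunk would come from `response.body.getReader()`. Decode it with a `TextDecoder`, passing `{ stream: true }` so multi-byte characters split across chunks survive, and call `end()` once the reader reports `done`.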

Technical Stack


Frontend Layer

Reactive hooks and streaming primitives.

```tsx
// Same simple hooks, different backend
import { useStream } from "@ainative/react";

export function LocalChat() {
  const { messages, handleSubmit } = useStream({
    api: "/api/local-chat",
  });

  // ... rest of UI
}
```

Backend Adapter

Universal protocol handlers for any runtime.

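```ts
import { OllamaHandler } from "@ainative/server-node";

// Exported as POST, route-handler style; the frontend above points at /api/local-chat.
export const POST = OllamaHandler({
  model: "llama3", // must be a model already pulled into Ollama, e.g. "mistral"
  host: "http://localhost:11434", // Ollama's default local address
  system: "You are a local assistant helping with private tasks.",
});
```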
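The handler's three options map naturally onto a single request to Ollama's `POST /api/chat` endpoint, with `system` sent as a leading message of role `"system"`. The sketch below shows that mapping under this assumption. `buildOllamaChatRequest`, `LocalChatConfig`, and `ChatMessage` are hypothetical names, and `OllamaHandler` is not necessarily implemented this way.

```typescript
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Mirrors the options passed to OllamaHandler above (assumed shape).
interface LocalChatConfig {
  model: string;
  host: string;
  system?: string;
}

interface OllamaChatRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

// Builds the fetch arguments for Ollama's POST /api/chat with streaming enabled.
export function buildOllamaChatRequest(
  config: LocalChatConfig,
  history: ChatMessage[],
): OllamaChatRequest {
  // Prepend the system prompt, if any, ahead of the conversation so far.
  const messages: ChatMessage[] = config.system
    ? [{ role: "system", content: config.system }, ...history]
    : history;
  return {
    url: new URL("/api/chat", config.host).toString(),
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ model: config.model, messages, stream: true }),
    },
  };
}
```

The result can be passed straight to `fetch(req.url, req.init)`. Keeping request construction separate from the network call lets it be tested without a running Ollama server.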