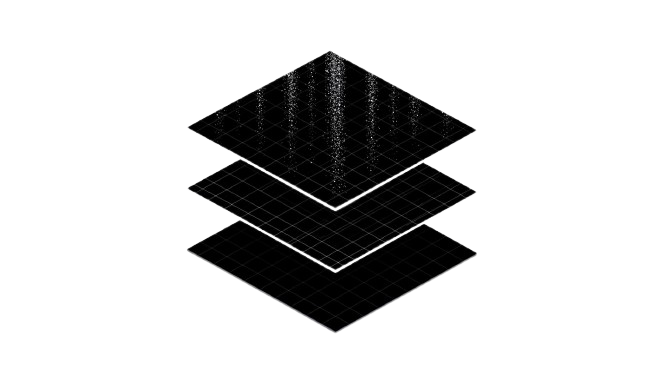

Architecture

A framework, layer by layer.

Every component is documented, swappable, and independently testable. The same protocol runs from your React app down to the model provider.

Layer 01

Client Runtime

Everything that runs in the browser. Pure TypeScript, zero runtime dependencies.

State Manager

Single source of truth for threads, messages, and tool state. Persists to IndexedDB by default.

Event Bus

Typed pub/sub for lifecycle events. Subscribe for analytics, telemetry, or custom UI.
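
As a rough illustration of what a typed pub/sub for lifecycle events could look like, here is a minimal sketch. The event names, payload shapes, and the `EventBus` class are assumptions made for this example, not the framework's actual API.

```typescript
// Hypothetical event map: names and payloads are illustrative only.
type LifecycleEvents = {
  "message:start": { threadId: string };
  "message:delta": { threadId: string; text: string };
  "message:done": { threadId: string; tokens: number };
};

class EventBus<Events> {
  // Handlers are stored loosely typed; the public methods keep callers type-safe.
  private handlers: Record<string, Array<(payload: any) => void>> = {};

  // Register a handler and return a function that removes it again.
  on<K extends keyof Events & string>(
    event: K,
    handler: (payload: Events[K]) => void
  ): () => void {
    if (!this.handlers[event]) this.handlers[event] = [];
    this.handlers[event].push(handler);
    return () => {
      const list = this.handlers[event];
      const index = list.indexOf(handler);
      if (index >= 0) list.splice(index, 1);
    };
  }

  emit<K extends keyof Events & string>(event: K, payload: Events[K]): void {
    const list = this.handlers[event];
    if (!list) return;
    // Iterate over a copy so a handler that unsubscribes mid-emit skips nobody.
    for (const handler of list.slice()) handler(payload);
  }
}

// Usage: collect streamed text for a telemetry sink, then unsubscribe.
const bus = new EventBus<LifecycleEvents>();
const seen: string[] = [];
const off = bus.on("message:delta", (event) => {
  seen.push(event.text);
});
bus.emit("message:delta", { threadId: "t1", text: "Hel" });
off();
bus.emit("message:delta", { threadId: "t1", text: "lo" }); // no longer delivered
```

Returning the unsubscribe function from `on` keeps cleanup local to the subscriber, which fits React effects that need a teardown callback.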

Streaming Engine

Transport-agnostic stream parser with backpressure, retry, and resume.
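
The parsing and resume parts of this can be sketched as follows. The wire format here (one JSON object per line, each carrying a numeric `id`) is an assumption made for the example, as are the `StreamEvent` and `LineStreamParser` names. The sketch leaves out backpressure and retry.

```typescript
// Assumed wire format (not confirmed by the source): newline-delimited JSON,
// each event carrying a monotonically increasing numeric `id`.
interface StreamEvent {
  id: number;
  type: string;
  data?: unknown;
}

class LineStreamParser {
  private buffer = "";
  // Highest id seen so far. A client could send it back to resume a dropped stream.
  lastEventId = -1;

  // Feed a text chunk from any transport and get back the events it completed.
  push(chunk: string): StreamEvent[] {
    this.buffer += chunk;
    const lines = this.buffer.split("\n");
    // The last element is "" or an unfinished line; keep it for the next chunk.
    this.buffer = lines.pop() ?? "";
    const events: StreamEvent[] = [];
    for (const line of lines) {
      if (line.trim() === "") continue;
      const event = JSON.parse(line) as StreamEvent;
      // Drop events replayed by the server after a resume.
      if (event.id <= this.lastEventId) continue;
      this.lastEventId = event.id;
      events.push(event);
    }
    return events;
  }
}

// Usage: a chunk boundary may fall in the middle of an event.
const parser = new LineStreamParser();
const first = parser.push('{"id":0,"type":"delta","data":"He');
const second = parser.push('llo"}\n{"id":1,"type":"done"}\n');
```

Because `push` takes plain strings, the same parser can sit behind a fetch body reader, a WebSocket message handler, or a test fixture.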

Reconciler

Computes minimal UI patches from partial AI output. This is what makes streaming output feel native.
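
To make the idea of a minimal patch concrete, here is a simplified sketch for plain text only. It compares the previous and next snapshot and emits the smallest change from the first differing character. The `Patch` shape and function names are assumptions for illustration. A real reconciler would work on structured UI trees, not flat strings.

```typescript
// Hypothetical patch shapes for a text-only reconciler.
type Patch =
  | { op: "none" }
  | { op: "append"; text: string }
  | { op: "replaceFrom"; index: number; text: string };

// Find the common prefix, then describe only what changed after it.
function diffText(prev: string, next: string): Patch {
  const limit = Math.min(prev.length, next.length);
  let i = 0;
  while (i < limit && prev.charCodeAt(i) === next.charCodeAt(i)) i++;
  if (i === prev.length && i === next.length) return { op: "none" };
  // Streaming output usually only grows, so this is the common case.
  if (i === prev.length) return { op: "append", text: next.slice(i) };
  // The model revised earlier output: rewrite from the first difference.
  return { op: "replaceFrom", index: i, text: next.slice(i) };
}

function applyPatch(prev: string, patch: Patch): string {
  switch (patch.op) {
    case "none":
      return prev;
    case "append":
      return prev + patch.text;
    case "replaceFrom":
      return prev.slice(0, patch.index) + patch.text;
  }
}

// Usage: a growing snapshot produces an append instead of a full re-render.
const growth = diffText("Hello", "Hello, wor");
const revision = diffText("Hello", "Help");
```

Treating append as its own operation lets a renderer add to an existing text node on the common path and fall back to a partial rewrite only when earlier output changes.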

Renderer

React bindings for streaming components. Headless-friendly, fully typed.

Layer 02

Server

The protocol bridge between your app and the model providers.

Node (Express)

Drop-in middleware that mounts the AINative protocol on any path.

Python (FastAPI)

Idiomatic FastAPI router with the same wire protocol as the Node adapter.

Edge runtimes

Vercel Edge, Cloudflare Workers, and Deno via the same shared core.

Layer 03

Providers

Pluggable adapters that translate between the AINative protocol and a specific LLM API.

OpenAI

Chat, tools, vision, and audio. GPT-4o, o1, and later models.

Anthropic

Claude models, tool use, prompt caching.

Ollama

Local models. Stream from any host on your network.

Custom

Adapter support is part of the design, so additional providers can be integrated without changing the protocol.
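
A custom adapter could be shaped roughly like the sketch below. Everything here is hypothetical: the `ProviderAdapter` interface, the protocol types, and the made-up "echo" provider exist only to show the two-way translation an adapter performs. The example is synchronous to stay short. A real adapter would stream asynchronously.

```typescript
// Hypothetical protocol-side shapes (not the actual AINative wire types).
interface ProtocolRequest {
  model: string;
  messages: { role: "user" | "assistant"; content: string }[];
}
interface ProtocolDelta {
  type: "text";
  text: string;
}

// An adapter translates requests outbound and streamed chunks inbound.
interface ProviderAdapter<Req, Chunk> {
  name: string;
  toProviderRequest(request: ProtocolRequest): Req;
  fromProviderChunk(chunk: Chunk): ProtocolDelta | null;
}

// A made-up provider that takes one prompt string and streams token objects.
interface EchoRequest {
  prompt: string;
}
interface EchoChunk {
  token?: string;
  ping?: boolean;
}

const echoAdapter: ProviderAdapter<EchoRequest, EchoChunk> = {
  name: "echo",
  toProviderRequest(request) {
    // Flatten the message list into the single prompt this provider expects.
    const lines = request.messages.map((m) => `${m.role}: ${m.content}`);
    return { prompt: lines.join("\n") };
  },
  fromProviderChunk(chunk) {
    // Keep-alive pings carry no content, so they map to no protocol event.
    return chunk.token === undefined ? null : { type: "text", text: chunk.token };
  },
};

// Usage: the rest of the stack only ever sees ProtocolRequest and ProtocolDelta.
const outbound = echoAdapter.toProviderRequest({
  model: "echo-1",
  messages: [{ role: "user", content: "hi" }],
});
const inbound = echoAdapter.fromProviderChunk({ token: "hi" });
```

Because provider-specific types stay inside the adapter, adding a provider in this sketch means writing one object and leaves the protocol types untouched.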

Layer 04

Tool Registry

How the model talks to your code. Type-safe, validated, and observable.

Schemas

JSON Schema or Zod, validated at the boundary.
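
Validation at the boundary means checking model-supplied arguments before any tool code runs. The sketch below hand-rolls a tiny validator that understands only the JSON Schema keywords `type`, `properties`, and `required`, so that it stays dependency-free. It is a stand-in for a full JSON Schema or Zod validator, and the `validate` function and `weatherArgs` schema are assumptions for illustration.

```typescript
// A deliberately small subset of JSON Schema: flat objects with primitive fields.
type FieldType = "string" | "number" | "boolean";
interface ObjectSchema {
  type: "object";
  properties: Record<string, { type: FieldType }>;
  required?: string[];
}

// Returns a list of human-readable problems; an empty list means valid.
function validate(schema: ObjectSchema, input: unknown): string[] {
  if (typeof input !== "object" || input === null || Array.isArray(input)) {
    return ["expected an object"];
  }
  const record = input as Record<string, unknown>;
  const errors: string[] = [];
  for (const key of schema.required ?? []) {
    if (!(key in record)) errors.push(`missing required property "${key}"`);
  }
  for (const key of Object.keys(schema.properties)) {
    if (!(key in record)) continue; // optional and absent is fine
    const expected = schema.properties[key].type;
    const actual = typeof record[key];
    if (actual !== expected) {
      errors.push(`"${key}" should be ${expected}, got ${actual}`);
    }
  }
  return errors;
}

// Hypothetical schema for a weather tool's arguments.
const weatherArgs: ObjectSchema = {
  type: "object",
  properties: { city: { type: "string" }, days: { type: "number" } },
  required: ["city"],
};

const okErrors = validate(weatherArgs, { city: "Oslo", days: 3 });
const badErrors = validate(weatherArgs, { days: "3" });
```

Returning every problem at once, not just the first, gives the model enough detail to correct its arguments in a single retry.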

Execution

Run client-side, server-side, or split across both.

State injection

Tools receive typed app state and a structured response API.

Composition

Tools can call other tools, with shared state and proper tracing.
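
One way to picture composition with shared state and tracing is sketched below. A runner hands each tool a context whose `call` method invokes another tool and records it as a child span. The `ToolRunner` class, the `ToolContext` shape, and the example tools are all hypothetical, and the sketch is synchronous for brevity.

```typescript
interface TraceSpan {
  id: number;
  parentId: number | null; // null marks a top-level call
  tool: string;
}

interface ToolContext {
  state: Record<string, unknown>; // shared across nested calls
  call(name: string, args: unknown): unknown; // run another tool as a child span
}

type Tool = (args: unknown, ctx: ToolContext) => unknown;

class ToolRunner {
  readonly trace: TraceSpan[] = [];
  // Prototype-free object so tool names like "constructor" cannot collide.
  private tools: Record<string, Tool> = Object.create(null);

  constructor(private state: Record<string, unknown> = {}) {}

  register(name: string, tool: Tool): void {
    this.tools[name] = tool;
  }

  run(name: string, args: unknown, parentId: number | null = null): unknown {
    const tool = this.tools[name];
    if (!tool) throw new Error(`unknown tool: ${name}`);
    const span: TraceSpan = { id: this.trace.length, parentId, tool: name };
    this.trace.push(span);
    const ctx: ToolContext = {
      state: this.state,
      // Nested calls inherit this span's id as their parent.
      call: (child, childArgs) => this.run(child, childArgs, span.id),
    };
    return tool(args, ctx);
  }
}

// Usage: greetUser composes getUser and reads shared state.
const runner = new ToolRunner({ greeting: "Hello" });
runner.register("getUser", (args) => {
  const { id } = args as { id: number };
  return { id, name: id === 1 ? "Ada" : "Unknown" };
});
runner.register("greetUser", (args, ctx) => {
  const user = ctx.call("getUser", args) as { name: string };
  return `${String(ctx.state["greeting"])}, ${user.name}`;
});
const reply = runner.run("greetUser", { id: 1 });
```

After this run, `runner.trace` holds two spans, with the `getUser` span pointing at the `greetUser` span as its parent, which is enough to rebuild the call tree for debugging.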